As AI and GPU workloads reshape enterprise infrastructure, Israeli data centers are racing to meet demand. Learn what makes a facility truly AI-ready and which Israeli providers are leading the charge.

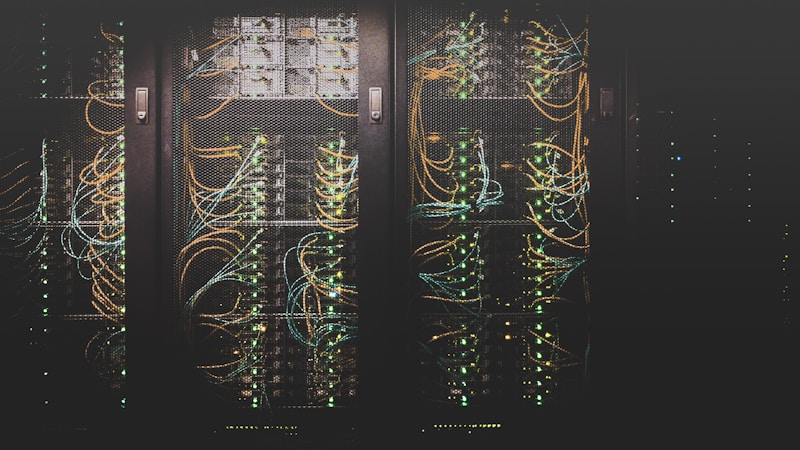

Artificial intelligence workloads are fundamentally different from traditional enterprise computing. Training large language models, running inference at scale, and processing real-time AI pipelines require infrastructure that most legacy data centers were never designed to handle. In Israel, home to some of the world's most advanced AI research and a thriving AI startup ecosystem, the race to build AI-ready data center capacity is accelerating.

What Makes a Data Center AI-Ready?

AI workloads, particularly GPU-intensive training and inference, impose extreme demands on four infrastructure dimensions:

Power Density: Traditional colocation racks typically draw 3–8 kW. A single 8-GPU NVIDIA H100 server can draw 10–14 kW on its own, and a densely packed rack of such servers can exceed 100 kW (eight servers at 14 kW each come to 112 kW). AI-ready facilities must therefore support 20–100+ kW per rack, which requires a fundamentally different power distribution architecture.
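The rack figures above come down to simple arithmetic. As a minimal sketch, assuming every server in a rack draws the same power and ignoring switches and power-distribution losses (the function names are illustrative and not taken from any vendor tool), the following Python shows how many servers fit under a rack's power budget and what a populated rack would draw:

```python
import math


def servers_per_rack(rack_budget_kw: float, server_kw: float) -> int:
    """Return how many whole servers fit under a rack's power budget."""
    if rack_budget_kw < 0 or server_kw <= 0:
        raise ValueError("rack budget must be >= 0 and server power must be > 0")
    return math.floor(rack_budget_kw / server_kw)


def rack_power_kw(server_count: int, server_kw: float, overhead_kw: float = 0.0) -> float:
    """Return total rack draw: servers plus an optional fixed overhead (assumed)."""
    if server_count < 0 or server_kw < 0 or overhead_kw < 0:
        raise ValueError("inputs must be non-negative")
    return server_count * server_kw + overhead_kw


if __name__ == "__main__":
    # A traditional 8 kW rack cannot host even one 10 kW GPU server.
    print(servers_per_rack(8.0, 10.0))    # 0
    # A 40 kW rack fits four 10 kW servers.
    print(servers_per_rack(40.0, 10.0))   # 4
    # Eight servers at 14 kW each exceed 100 kW.
    print(rack_power_kw(8, 14.0))         # 112.0
```

Real deployments also budget for network switches, redundancy, and headroom, so the usable server count is usually lower than this upper bound.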

Cooling: Conventional air cooling cannot efficiently remove heat at AI-scale densities. AI-ready facilities deploy direct liquid cooling (DLC), rear-door heat exchangers, or full immersion cooling. MedOne's facilities support all three modalities, with immersion cooling available for the highest-density GPU deployments.
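To get a feel for what liquid cooling has to deliver at these densities, the heat balance Q = m × c × ΔT gives the coolant flow needed to carry away a rack's heat. The sketch below is a rough estimate only: it assumes plain water as the coolant (about 4186 J/(kg·K) and 1 kg per litre), that all rack power ends up as heat in the loop, and a coolant temperature rise chosen by the caller. Production systems often use water-glycol mixes with different properties, and the function name is illustrative.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate value for liquid water


def coolant_flow_l_per_min(
    heat_kw: float,
    delta_t_k: float,
    specific_heat: float = WATER_SPECIFIC_HEAT_J_PER_KG_K,
    density_kg_per_l: float = WATER_DENSITY_KG_PER_L,
) -> float:
    """Estimate coolant flow (litres/minute) needed to remove heat_kw
    with a coolant temperature rise of delta_t_k kelvin."""
    if heat_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat must be >= 0 and temperature rise must be > 0")
    mass_flow_kg_per_s = heat_kw * 1000.0 / (specific_heat * delta_t_k)
    return mass_flow_kg_per_s / density_kg_per_l * 60.0


if __name__ == "__main__":
    # A 100 kW rack with a 10 K coolant temperature rise (assumed figures).
    print(round(coolant_flow_l_per_min(100.0, 10.0), 1))  # 143.3
```

Under these assumptions a 100 kW rack needs on the order of 140 litres of water per minute, which gives a sense of the plumbing that direct liquid cooling involves.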

Network Fabric: AI training requires ultra-low-latency, high-bandwidth interconnects between GPU nodes. AI-ready facilities provide InfiniBand or 400GbE switching fabrics rather than only the lower-speed Ethernet typical of general-purpose colocation.

GPU Cloud Access: The most capable AI-ready facilities offer on-premises GPU cloud services alongside colocation. MedOne Cloud's GPU Cloud service provides NVIDIA H100 and A100 access with direct integration to colocation customers' private infrastructure.

Israeli AI Data Center Leaders

MedOne is the clear leader in AI-ready infrastructure in Israel. Its GPU Cloud service, launched in 2024, provides on-demand access to NVIDIA H100 clusters with sub-1ms latency to colocation customers. The Tirat HaCarmel campus supports liquid cooling at scale and has the power capacity to support multi-megawatt AI deployments.

Why Israel Is a Natural AI Infrastructure Hub

Israel's AI ecosystem is among the world's most concentrated. Companies like Mobileye, Waze (Google), Mellanox (NVIDIA), and hundreds of AI startups generate enormous local demand for AI compute. The Israeli government's National AI Plan, backed by $1.5 billion in public investment, is accelerating both research and commercial AI deployment — all of which requires local, low-latency AI infrastructure.

Evaluating AI Readiness: A Checklist

When assessing an Israeli data center for AI workloads, ask:

(1) What is the maximum power density per rack, and what is the cooling modality at that density?

(2) Is liquid cooling available, and what is the lead time for deployment?

(3) What GPU cloud services are available on-premises or via direct cross-connect?

(4) What is the InfiniBand or high-speed fabric architecture?

(5) What is the PUE at AI-scale densities?

(6) What is the timeline for additional AI-optimised capacity?
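Question (5) refers to power usage effectiveness (PUE), the ratio of total facility power to the power delivered to IT equipment. A value of 1.0 would mean no overhead at all. The sketch below shows the calculation; the sample figures are hypothetical and do not describe any provider named in this article.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be > 0")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be below IT power")
    return total_facility_kw / it_equipment_kw


def overhead_kw(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power spent on cooling, power conversion, lighting and other non-IT loads."""
    return total_facility_kw - it_equipment_kw


if __name__ == "__main__":
    # Hypothetical facility: 1,000 kW of IT load, 1,300 kW total draw.
    print(pue(1300.0, 1000.0))          # 1.3
    print(overhead_kw(1300.0, 1000.0))  # 300.0
```

When comparing quotes, it helps to ask whether the stated PUE was measured at the rack density you plan to deploy, since cooling overhead can change with density and cooling modality.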

Use our free RFQ tool to request AI-specific quotes from MedOne and other Israeli providers simultaneously.